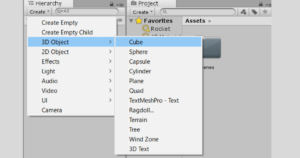

The background image will always be drawn behind other objects while a reference will show up in front. This will import the image in the same way but with different preferences set. Note that we also have a "Reference" option in the image submenu. Then browse for the image we want to import. We can reset the rotation with Alt+R.įrom there we can position the image using the regular transform tools, Rotate, Move and, scale.Īnother way is to press Shift+A in the 3D viewport and go to image→Background. When you do the image will align to the view. The easiest way to import an image as background is to drag-and-drop it from your file browser.

Suggested content: Artisticrender's E-Book How to import an image as a background image It has helped many people learn Blender faster and deepen their knowledge in this fantastic software. For example, if you had the intention to bring in an image as a background image and later need it as a reference image, you can select it from Blenders internal storage.īy the way, if you enjoy this article, I suggest that you look at my E-Book. Then we can select it from Blenders internal image storage. No matter how we import an image into Blender, once we imported it, it is available for us in all areas of Blender. We will look at each of the practical ways in this article. There are more ways that we can import an image into Blender. If you drop it into the shader editor, it will get added as an image texture node. If you drop it in the 3D viewport it will become a background image object.

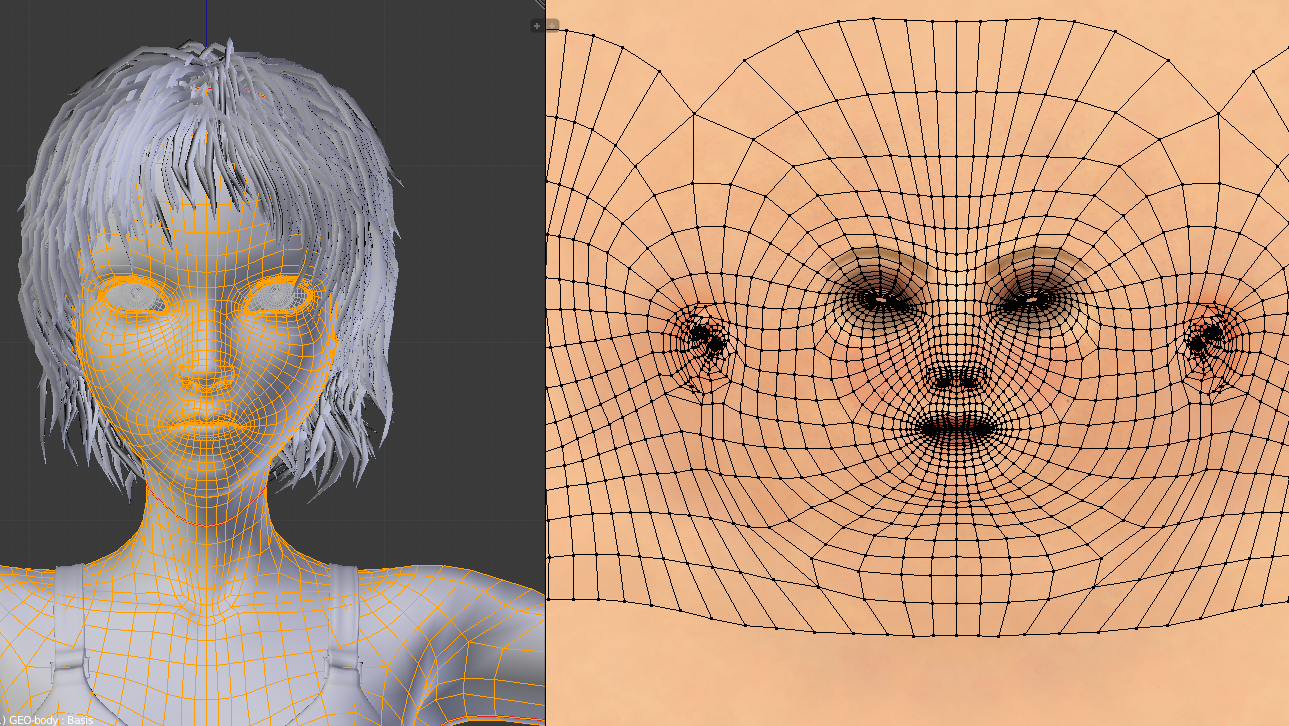

The most basic way to import an image into Blender is to drag-and-drop it. The best way depends on what we want to do with the image once we imported it. If you notice you’ve missed a part of the scene or object you’re capturing, simply return to that spot, or bring the camera closer and watch as all the details are captured.There are many ways to import images into Blender. This allows you to use a mesh preview feature that shows you the mesh Polycam is creating in real-time. To make the most out of Polycam, users who have an iPhone 12 (or later if you are seeing this post down the road) are able to use Polycam’s LiDAR capture feature. Not only will it create the mesh for you, but it will also generate textures as well. Once the app - and the user - are satisfied with the information collected, the program’s AI will take all of that data and generate a 3D model. The app takes the pictures for you, and it lets you know if you are moving too fast or too slow. While that may seem daunting, in practice it really isn’t.

While true, this process doesn’t work from a single image, there are two options available: LiDAR capture, or Photo Mode, which essentially takes hundreds of photos for you. There are a number of different ways you can go about doing this, so let’s dive in and talk about these different methods and programs!Īnother exciting technological advancement has come in the form of Polycam, an IOS app that simplifies the 3D photo scanning process. Working from photos is a win-win if you know where to start and have access to the right tools. If you are looking to achieve realism, photos are a great place to start for obvious reasons: they represent the perfect real-world proportions of the object, and you already have the source material to create the textures for the model as well. While advanced learning is invaluable, it is now easier than ever for anybody to hop into 3D and start making progress. There is also something to be said about lowering the barriers to entry in 3D. There are a number of reasons why this might intrigue you. And let’s not forget all the hobbyists and indie creators who can benefit from shortcuts that warrant fair results. Or more likely, you work hard creating lots of 3D models and are always looking for new technological trends to speed up your pipeline. Maybe you want to make a deep fake Instagram account and wow the world with the stunning accuracy you are able to achieve mimicking famous faces like, say, Tom Cruise.
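For readers who prefer scripting, the Blender background-image workflow described in this article (drag-and-drop, or Shift+A → Image → Background) can be approximated with a short Python sketch. This is only an illustration under stated assumptions, not what Blender does internally: the helper names `image_empty_depth` and `add_image_empty` are hypothetical, and mapping "reference" to the `'FRONT'` depth setting is an assumption made to mirror the behavior described in this article (Blender's own Reference option may use different defaults). The second function only works inside Blender, where the `bpy` module is available.

```python
from pathlib import Path

# Depth setting per image kind. 'BACK' draws the image behind other objects;
# 'FRONT' draws it in front (assumption chosen to match the article's wording).
IMAGE_EMPTY_DEPTH = {"background": "BACK", "reference": "FRONT"}


def image_empty_depth(kind: str) -> str:
    """Return the image-empty depth setting for 'background' or 'reference'."""
    try:
        return IMAGE_EMPTY_DEPTH[kind]
    except KeyError:
        raise ValueError(f"kind must be one of {sorted(IMAGE_EMPTY_DEPTH)}") from None


def add_image_empty(filepath: str, kind: str = "background"):
    """Load an image and show it on an image empty (hypothetical helper)."""
    depth = image_empty_depth(kind)  # validate before touching Blender

    import bpy  # only importable inside Blender's bundled Python

    path = Path(filepath).expanduser()
    # Reuse the datablock if the image already sits in Blender's image storage.
    image = bpy.data.images.load(str(path), check_existing=True)

    # Passing None as object data creates an empty; display it as an image.
    empty = bpy.data.objects.new(path.stem, None)
    empty.empty_display_type = "IMAGE"
    empty.data = image
    empty.empty_image_depth = depth

    # Link the empty into the active collection so it appears in the scene.
    bpy.context.collection.objects.link(empty)
    return empty
```

Inside Blender's scripting workspace, calling `add_image_empty("/path/to/photo.png", kind="reference")` (with a path of your own) should add the image as an empty that can then be moved, rotated, and scaled like any other object. Unlike drag-and-drop, this sketch does not align the image to the current view.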
